Surveys

Validate findings with quantitative data

Qualitative data from usability tests and in-depth customer interviews helps us build robust mental models of our customers and strengthens our intuition about them, which leads to more informed product decisions. However, sometimes we also need to statistically validate certain findings through analytics and surveys. In other words, qualitative data helps us understand why people behave a certain way, but we also need to know what the larger market is currently doing or thinking.

One of our favourite quantitative research methods is the survey.

When surveys are useful

Surveys help to validate a question quantitatively. If we know what we need to ask and who to ask, they are a quick and easy way to get answers.

Surveys work well with qualitative research and can be run before or after customer interviews. If there are no analytics about who your customers are, surveys can be a great way to get a good understanding of their behavioural and demographic dimensions before investigating the why behind the answers. They can also be used after a round of qualitative research or a design sprint to validate hypotheses that were created along the way.

Examples of surveys

Gathering data about a group of people

Surveys suit cases where you need to know demographic information (like age, gender, income, and location) and easy-to-answer behavioural information (for example, which bank people are with, how often they go on holiday, and whether they have a family doctor).

Open-ended feedback after an experience

This can be done as part of a qualitative research study; for example, we send out a survey to everyone who attended an innovation conference, asking them a few open-ended questions as well as the metric questions.

Customer satisfaction metrics

This can be a metric-only survey that goes out to all customers after an experience, for example, an NPS or CSAT survey. If we had to recommend one of those, we would prefer CSAT over NPS, but it’s important to remember that these only validate that one question, and only for the people who answered the survey. If a CSAT score is low, it can’t tell you why it’s low, only that people gave you a low score. Finding out why requires further investigation.
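As a rough illustration of how these two metrics are usually calculated, here is a minimal Python sketch. It assumes the common conventions: NPS is asked on a 0 to 10 scale and is the percentage of promoters (9 or 10) minus the percentage of detractors (0 to 6), and CSAT is asked on a 1 to 5 scale and is the percentage of respondents who answered 4 or 5. The function names and the sample scores are made up for the example; your survey tool may use a different scale or cut-off.

```python
def nps(scores):
    """Net Promoter Score from 0-10 ratings.

    Percentage of promoters (9 or 10) minus percentage of detractors (0 to 6).
    """
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return round(100 * (promoters - detractors) / len(scores))


def csat(ratings, satisfied_from=4):
    """CSAT from 1-5 ratings: percentage of answers at or above `satisfied_from`.

    The cut-off of 4 is an assumed convention, not a fixed rule.
    """
    satisfied = sum(1 for r in ratings if r >= satisfied_from)
    return round(100 * satisfied / len(ratings))


# Hypothetical responses, invented for this example.
nps_scores = [10, 9, 9, 8, 7, 7, 6, 5, 3, 10]
csat_ratings = [5, 4, 4, 3, 2, 5, 1, 4, 5, 3]

nps_result = nps(nps_scores)
csat_result = csat(csat_ratings)
print(f"NPS: {nps_result}, CSAT: {csat_result}%")
```

Both numbers summarise only the people who responded, and neither says anything about why a score is high or low.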

When surveys are not that helpful

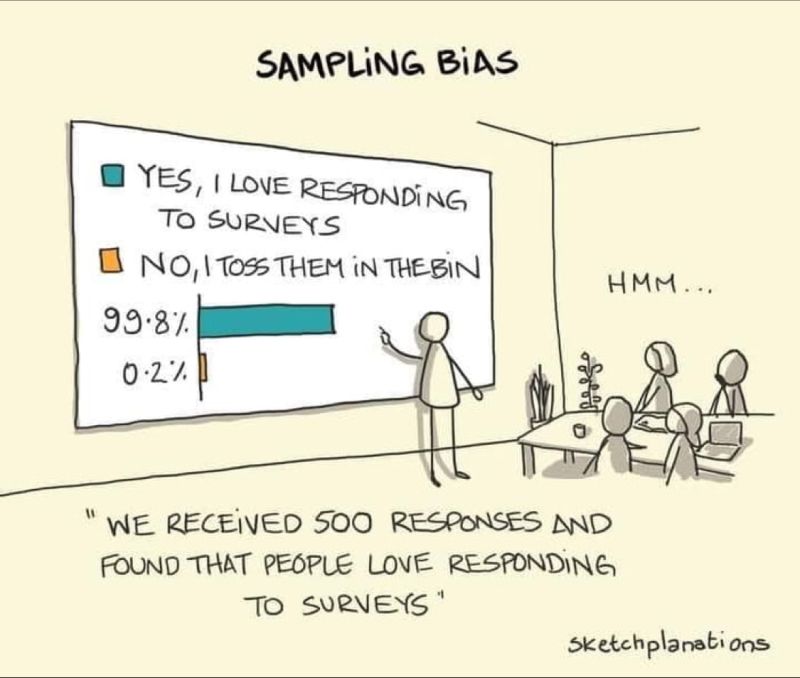

Sampling bias

There are a few things to know when using surveys to gather customer insight. Firstly, we need to ask, “Who is answering the survey?” If we send the survey to 1000 people who are a good representation of our target market, we may only get 100 responses. We need to confirm that this group is still a good representation, and not just the people who tend to answer surveys.
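One simple way to run that check is to compare the make-up of the people who responded with the make-up of the people who were invited. The Python sketch below does this for a single dimension (age band). The data, the function names, and the 10 percentage point tolerance are all assumptions made for the example; it is a quick sanity check, not a statistical test.

```python
from collections import Counter


def share_by_group(groups):
    """Return each group's share of the list as a fraction between 0 and 1."""
    counts = Counter(groups)
    total = len(groups)
    return {group: count / total for group, count in counts.items()}


def representation_gaps(invited, responded, tolerance=0.10):
    """Find groups that are over- or under-represented among respondents.

    Returns {group: gap} for every invited group whose share among
    respondents differs from its share among invitees by more than
    `tolerance`. A negative gap means the group is under-represented.
    """
    invited_share = share_by_group(invited)
    responded_share = share_by_group(responded)
    gaps = {}
    for group, expected in invited_share.items():
        gap = responded_share.get(group, 0.0) - expected
        if abs(gap) > tolerance:
            gaps[group] = round(gap, 2)
    return gaps


# Hypothetical data: 1000 invitations and 100 responses, grouped by age band.
invited = ["18-34"] * 400 + ["35-54"] * 400 + ["55+"] * 200
responded = ["18-34"] * 20 + ["35-54"] * 45 + ["55+"] * 35

response_rate = len(responded) / len(invited)
gaps = representation_gaps(invited, responded)
print(f"Response rate: {response_rate:.0%}")
print(f"Groups outside tolerance: {gaps}")
```

In this made-up case the youngest group makes up 40% of the invitations but only 20% of the responses, so the results would lean towards older customers unless that gap is corrected or at least acknowledged.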

Sketchplanation and Herman Singh

In the pre-Covid world, usability tests were run either at a hired venue or at your offices, provided you had two rooms available and a good internet connection. Since lockdown forced us to conduct our research remotely, we have become rather good at it and still prefer it now that lockdown has lifted. At the beginning of lockdown, we wrote a blog post investigating all the pros and cons of remote testing.

In the few instances that we need to run an in-person test, we will discuss a venue and logistics in the kick-off session.

For our remote sessions, we stream the tests over a secure YouTube or Vimeo link so your team can watch them live, or we can record them to watch later. We edit the recorded videos slightly to remove the parts where we are getting set up. There is a lot of “Can you hear me?” in remote testing.